I ran into a problem while trying to provision Python automatically on Windows. I could install Python as an administrator, but when I switched to a Limited User Account and tried to use pip or virtualenv, I got nothing but obscure failures.

The key phrase that kept popping up was:

"WindowsError: [Error 183] Cannot create a file when that file already exists: 'C:\\Documents and Settings\\Administrator\\.distlib'"

as distlib kept trying to put things into the admin account by default.

    C:\provision-windows-master>pip install virtualenv
    Traceback (most recent call last):
      File "c:\Python27\Scripts\pip-script.py", line 9, in <module>
        load_entry_point('pip==1.5', 'console_scripts', 'pip')()
      File "build\bdist.win32\egg\pkg_resources.py", line 353, in load_entry_point
      File "build\bdist.win32\egg\pkg_resources.py", line 2302, in load_entry_point
      File "build\bdist.win32\egg\pkg_resources.py", line 2029, in load
      File "C:\Python27\lib\site-packages\pip\__init__.py", line 11, in <module>
        from pip.vcs import git, mercurial, subversion, bazaar # noqa
      File "C:\Python27\lib\site-packages\pip\vcs\subversion.py", line 4, in <module>
        from pip.index import Link
      File "C:\Python27\lib\site-packages\pip\index.py", line 16, in <module>
        from pip.wheel import Wheel, wheel_ext, wheel_setuptools_support
      File "C:\Python27\lib\site-packages\pip\wheel.py", line 23, in <module>
        from pip._vendor.distlib.scripts import ScriptMaker
      File "C:\Python27\lib\site-packages\pip\_vendor\distlib\scripts.py", line 15, in <module>
        from .resources import finder
      File "C:\Python27\lib\site-packages\pip\_vendor\distlib\resources.py", line 105, in <module>
        cache = Cache()
      File "C:\Python27\lib\site-packages\pip\_vendor\distlib\resources.py", line 40, in __init__
        base = os.path.join(get_cache_base(), 'resource-cache')
      File "C:\Python27\lib\site-packages\pip\_vendor\distlib\util.py", line 602, in get_cache_base
        os.makedirs(result)
      File "C:\Python27\lib\os.py", line 157, in makedirs
        mkdir(name, mode)
    WindowsError: [Error 183] Cannot create a file when that file already exists: 'C:\\Documents and Settings\\Strong\\.distlib'

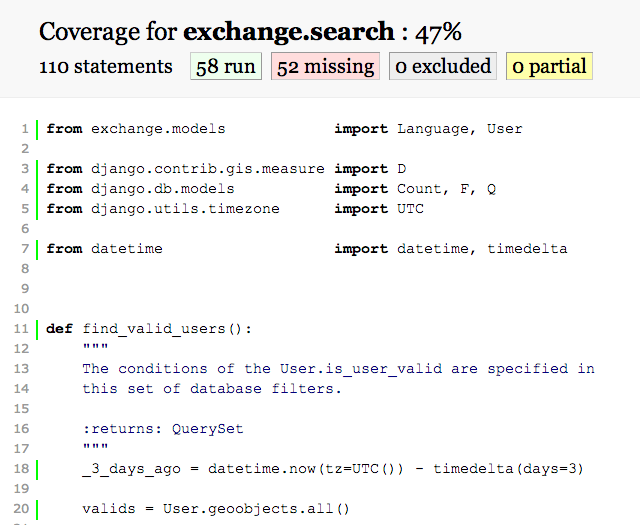

To make sense of the error, I had to dig into the code closest to where the WindowsError was thrown, which looked like this:

    def get_cache_base(suffix=None):
        """
        Return the default base location for distlib caches. If the directory does
        not exist, it is created. Use the suffix provided for the base directory,
        and default to '.distlib' if it isn't provided.

        On Windows, if LOCALAPPDATA is defined in the environment, then it is
        assumed to be a directory, and will be the parent directory of the result.
        On POSIX, and on Windows if LOCALAPPDATA is not defined, the user's home
        directory - using os.expanduser('~') - will be the parent directory of
        the result.

        The result is just the directory '.distlib' in the parent directory as
        determined above, or with the name specified with ``suffix``.
        """
        if suffix is None:
            suffix = '.distlib'
        if os.name == 'nt' and 'LOCALAPPDATA' in os.environ:
            result = os.path.expandvars('$localappdata')
        else:
            # Assume posix, or old Windows
            result = os.path.expanduser('~')
        result = os.path.join(result, suffix)
        # we use 'isdir' instead of 'exists', because we want to
        # fail if there's a file with that name
        if not os.path.isdir(result):
            os.makedirs(result)
        return result

Lo and behold, setting the LOCALAPPDATA environment variable to a writable folder that the Limited user can access fixes the issue. But it strikes me that get_cache_base() should probably also check the APPDATA environment variable before falling back to os.path.expanduser().

And, for whatever odd reason, the os.path.expanduser() call seems to resolve to the administrator's profile, as if it were using the user credentials associated with the Python executable or something. At no point while running this code as a Limited User do I ask for it to act as an Administrator. It's a bit odd.
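One way to narrow this down: in Python 2.7 on Windows, os.path.expanduser('~') does not look at credentials at all. It reads the HOME, USERPROFILE, and HOMEDRIVE/HOMEPATH environment variables, in that order. A small diagnostic like the sketch below shows what the Limited User's process actually inherited. The function name profile_environment is made up for this example, and I'm assuming a stale inherited variable is the culprit; I haven't confirmed that.

```python
import os

# Variables that Python 2.7's ntpath.expanduser() consults, in this order,
# followed by the two that matter for distlib's get_cache_base().
HOME_VARS = ("HOME", "USERPROFILE", "HOMEDRIVE", "HOMEPATH")
CACHE_VARS = ("LOCALAPPDATA", "APPDATA")


def profile_environment(env=None):
    """Return the home- and cache-related variables found in env.

    Hypothetical helper for illustration; missing variables map to None.
    """
    if env is None:
        env = os.environ
    return {name: env.get(name) for name in HOME_VARS + CACHE_VARS}


if __name__ == "__main__":
    # Print one variable per line so a wrong profile path stands out.
    for name, value in sorted(profile_environment().items()):
        print("%-13s %s" % (name, value if value is not None else "<not set>"))
```

If any of the home variables still point at the administrator's profile when run as the Limited User, that would explain why distlib ends up there.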

    C:\Documents and Settings\Limited\Desktop>set
    APPDATA=C:\Documents and Settings\Limited\Application Data

    C:\Documents and Settings\Limited\Desktop>set LOCALAPPDATA=%APPDATA%

    C:\Documents and Settings\Limited\Desktop>virtualenv venv
    New python executable in venv\Scripts\python.exe
    Installing setuptools, pip...done.
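Since the whole point was automated provisioning, the same workaround can be scripted instead of typed into a console. This is only a minimal sketch: with_localappdata and run_with_cache_fix are names I made up, and it assumes APPDATA is set and writable for the Limited User, as it was in the session above.

```python
import os
import subprocess


def with_localappdata(env):
    """Return a copy of env with LOCALAPPDATA filled in from APPDATA.

    An existing LOCALAPPDATA is left alone. If neither variable is set,
    the copy is returned unchanged.
    """
    patched = dict(env)
    if not patched.get("LOCALAPPDATA") and patched.get("APPDATA"):
        patched["LOCALAPPDATA"] = patched["APPDATA"]
    return patched


def run_with_cache_fix(command):
    """Run command (a list of arguments) with the patched environment.

    Returns the exit code of the child process.
    """
    return subprocess.call(command, env=with_localappdata(os.environ))
```

Calling run_with_cache_fix(["virtualenv", "venv"]) would then mirror the manual `set LOCALAPPDATA=%APPDATA%` step, without touching the environment of the parent process.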

In any case, it works, but the solution is less than obvious.